Seduction

In the past I've been a big fan of PC watercooling with the hobby reaching its climax with my Thor II build. That one was surely spectacular to look at but it had cost so much and given so few advantages that I had decided to go back to air cooling for 2014's Thor III and put the money that blocks and rads would have cost in the actual components instead. Looking back I still see this choice as a good one, and the only downside of this build was that it has been... boring: Everything worked as intended from day one, I've never had any issues whatsoever and never had to fix or change anything.

So the years passed by and in 2019 the huge impact of AMD's Zen2 architecture launch made me think about planning a new build. I wasn't under pressure as my current rig was still not causing any issues, but it started showing its age in terms of gaming performance and I was very tempted to jump on the AMD train. Unfortunately in mid 2019 I discovered Reddit and very quickly found myself scrolling through the eye candy of r/watercooling. I couldn't resist.

The Naive Approach

So the idea of Thor IV as a new water-cooled rig was born. And it was time to come up with a shopping list.

The Case

The question of which case to go with was very easy this time. I had been very happy with the Enthoo Luxe for Thor III made by Phanteks so I checked their product lineup and stumbled upon the Evolv X, which I instantly fell in love with. With tempered glass side panels, discreet (enough) RGB and nice little premiums like fill-/drainport holes and cable management covering doors it seemed like the perfect fit for a non-overkill (looking at you, LDPC-V8) water-cooled build.

Choosing a CPU

The CPU was a quick one as well: having ditched Gentoo long ago and not doing much encoding or scientific calculations it's been pretty clear that massive core count would not give me any advantage, and even modern games still tend to benefit more from single-thread performance. The R7 3700X was very hard to get so I opted for an R7 3800X, smiling while thinking about how dumb this choice would look to others. I knew very well that you can get almost any 3700X clock up to the 3800X but I wanted to start the build and didn't care about the small extra on the bill.

All-AMD with Navi

For the GPU I chose to go with AMD's new Navi series, for three reasons: first of all I wanted to support them for their efforts to bring back real competition with Intel. Secondly I liked the idea of getting rid of the proprietary NVIDIA drivers on Linux, which would allow me to finally give Wayland a go and not be greeted by the kernel telling me it's tainted by the nvidia module. And lastly, having been an Intel/NVIDIA user for so long it was interesting to go with something different again.

In order to not get too far behind NVIDIA's RTX series my choice fell on the Radeon RX 5700XT, which would offer enough performance at a good (enough) price point. Looking at numerous benchmarks I learned that there would not be much of a difference in terms of performance between various vendors and, having my watercooling plans in mind, I opted for a cheap card from XFX with reference layout that would fit a nice full-cover block from EK. A choice that would have its consequences...

A Truly Exquisite Motherboard

I had let go of overclocking long ago and learned to love the reliability of a stable system, so I didn't pay too much attention to all the 'Gaming' and 'OC' boards for AM4. At the same time I didn't want to miss the ability to go for PCIe 4.0 storage at some point in the future so I narrowed my scope down to X570 boards. The issue with X570 was that most manufacturers had put an active cooling solution on the chipset, i.e. a fan that could either be noisy or just fail at some point. I didn't want that, which cut my options down to basically two boards: the X570 AORUS Xtreme from Gigabyte and a very special board from ASRock: the AQUA. This board is truly exceptional: it is equipped with a massive aluminum block that covers almost the entire front, thermally connecting the integrated CPU block with passive elements for the M.2 slots as well as the chipset and the 10G Ethernet PHY. All unobtrusively lit by two strips of RGB LEDs. The thing is limited to 999 units and has its serial no. lasered on the block. Exquisite. I fell in love at first sight.

Storage Plans

My previous rig used SATA SSDs for the operating systems and main data as well as two old 1TB WD Green disks in BTRFS-RAID1 mode for backup and scratch space. For the new build I wanted to get rid of all rotating drives and M.2 NVMe caught me with its simplicity: no need for running SATA power or data cables, all hidden and cooled by the massive motherboard block. PCIe Gen4 was brand new at that point and there was only one SSD from Corsair supporting it, so I opted for PCIe 3.0 with the 1TB ADATA XPG SX8200. My plan was to extend this by another 1TB NVMe SSD in the future that would go into the second M.2 slot.

Cooling

As I was already sold on going back to watercooling I first thought of reusing the equipment from my Thor II build, which included my trusty old Laing DDC-1T Plus pump as well as three 480 radiators. Unfortunately even with all the options that the Evolv X offers in terms of mounting radiators, there is no way of fitting two 140mm rads in there. I also read about most 140mm fans not reaching the same level of static pressure as their 120mm counterparts so I decided to go with two 360 rads. Pretty much all reviews I found recommended Hardware Labs but all of their rads were sold out at that point so I went with ones from EK Water Blocks.

At that point I was already set on a black/silver colour theme that made me think of the equipment from Batman Begins, and my old black nickel reservoir absolutely did not match that, so I decided to take the opportunity to go RGB there as well with the EK-RES X3 250 RGB.

For tubing my first intention was to jump on the hard tube train, but looking at my shopping list I quickly put back that idea and went with soft tubing for the first assembly, keeping hard tubes as an upgrade plan for the future. That allowed me to make use of all my old fittings which turned out to be a substantial item removed from the shopping list.

Shopping List

This is what I ended up with as my initial shopping list:

- Case: Phanteks Evolv X (graphite grey)

- CPU: AMD Ryzen 7 3800X

- GPU: XFX Radeon RX 5700XT

- Motherboard: ASRock X570 AQUA

- Storage: ADATA XPG SX8200 1TB M.2 NVMe SSD

- Radiators: 2x EK-CoolStream PE 360

- Fans: 6x Noctua NF-F12, 1x Noctua NF-A14, all PWM chromax.black.swap

- GPU Block: EK-Vector Radeon RX 5700 +XT D-RGB - Special Edition

- Reservoir: EK-RES X3 250 RGB

- Tube: Tygon black 11/8mm

The following parts were brought over from Thor III to complement the system:

- Memory: 4x 4GB DDR4-2666 Corsair Dominator Platinum

- PSU: beQuiet! Dark Power Pro 850W

Initial Build

It was late November 2019 when all the parts had finally arrived and everything was ready-for-build. I took two evenings after work to convert the GPU to watercooling and pre-mount the radiators in the case and planned to do the rest on a rainy Saturday.


Assembly went relatively smoothly, and I was very happy about having postponed the idea of hard tubing: the bends would have been extremely hard to do, especially for a rigid tubing beginner like me. In fact I was having trouble getting everything connected even with soft tubes. Realizing that a particular connection would not work out and then taking apart the work of the past two hours again and again made me think of the old rule that a loop never ends up as designed on paper.

At the end of said Saturday I was ready to press the power button and... it sort of worked. POST took exceptionally long but I already knew of this Ryzen speciality of extended memory training. I was greeted by a BIOS that felt a bit stuttery and offered loads of options that I had never seen before and that were entirely undocumented in the manual, so I left most of them untouched.

In contrast to numerous exotic features there was something missing that I had expected to be there for sure: the option for turning the motherboard RGB lighting on and off with the system. There was indeed an option for the RGB LEDs but setting it to on made them stay on even when the system was powered down and setting it to off kept them off even with a running system. How stupid! So you either light up your room at night or you can't make use of the RGB lighting at all. Great.

Another thing I noticed was that my NVMe SSD was not being recognized which was very unfortunate for the upcoming operating system installation. A couple of reboots later I had found out that the system would require multiple cold starts (with the power cable unplugged in between) to recognize the SSD. Very annoying, but it didn't stop me from proceeding with the Arch installation.
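For anyone chasing a similar problem from a Linux live environment, a quick way to see whether the kernel found an NVMe controller at all is to look at sysfs. The following is only a minimal sketch: it assumes a Linux system that exposes controllers under `/sys/class/nvme` with a `model` attribute, and the function name `list_nvme_controllers` is made up for this illustration.

```python
from pathlib import Path


def list_nvme_controllers(root="/sys/class/nvme"):
    """Return (controller name, model string) pairs for all NVMe controllers found.

    `root` is a parameter only so the function can be pointed at a test
    directory; on a real Linux system the default sysfs path is assumed.
    """
    base = Path(root)
    if not base.is_dir():
        return []  # no NVMe controller registered, or not a Linux system

    controllers = []
    for ctrl in sorted(base.iterdir()):
        model_file = ctrl / "model"
        # The model string is padded with spaces in sysfs, so strip it.
        model = model_file.read_text().strip() if model_file.is_file() else "unknown"
        controllers.append((ctrl.name, model))
    return controllers


if __name__ == "__main__":
    found = list_nvme_controllers()
    if not found:
        print("No NVMe controller detected")
    for name, model in found:
        print(f"{name}: {model}")
```

An empty result right after boot matches the symptom described above: the SSD was not enumerated at all, which points at the hardware or firmware side and not at the operating system.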

Said installation went fine and my first impressions of sway as the closest substitute for awesome on Wayland (I do know of Way Cooler but that one seems discontinued) were positive. So I went on installing Windows 10 as my secondary OS for gaming. This looked good as well up to the point when I installed the AMD Adrenalin drivers for the RX 5700XT, which caused the system to reset instantly. After that I couldn't even get to the login anymore: Windows either BSOD'd immediately (VIDEO_SCHEDULER_INTERNAL_ERROR) or provided me with an absolute masterpiece of abstract visual arts on screen.

Searching the net I found multiple reports about the incredibly bad drivers AMD was shipping at that time, but not a single one described the pixel arts I was seeing, and DDU'ing the drivers and re-installing them did not help either. So I feared that I had done something terribly wrong when converting my GPU to watercooling, e.g. overtightening some of the screws or using the wrong thermal pads. I re-seated the block and ordered another set of pads to make absolutely sure that I wasn't mixing them up. Unfortunately that didn't help. Just when I was about to put the poor card in my kitchen oven to attempt a crazy variant of reflow (or reball) I discovered that the card was working fine when limited to PCIe Gen2 link speed via BIOS.
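On the Linux side, the link speed a PCIe device has actually negotiated can be compared with what it is capable of. The sketch below is an illustration, not what I used at the time: it assumes the sysfs attributes `current_link_speed` and `max_link_speed` are present for the device, and the helper names `parse_gts` and `link_report` are invented here.

```python
from pathlib import Path


def parse_gts(text):
    """Extract the transfer rate in GT/s from a string such as '8.0 GT/s PCIe'."""
    try:
        return float(text.split()[0])
    except (IndexError, ValueError):
        return None  # e.g. 'Unknown' or an empty attribute


def link_report(root="/sys/bus/pci/devices"):
    """Return (device, current GT/s, maximum GT/s) for devices exposing both values."""
    base = Path(root)
    if not base.is_dir():
        return []

    report = []
    for dev in sorted(base.iterdir()):
        cur_file = dev / "current_link_speed"
        max_file = dev / "max_link_speed"
        if not (cur_file.is_file() and max_file.is_file()):
            continue  # device has no PCIe link information
        try:
            current = parse_gts(cur_file.read_text())
            maximum = parse_gts(max_file.read_text())
        except OSError:
            continue  # attribute exists but cannot be read
        if current is not None and maximum is not None:
            report.append((dev.name, current, maximum))
    return report


if __name__ == "__main__":
    for name, current, maximum in link_report():
        if current < maximum:
            print(f"{name}: link at {current} GT/s, device supports {maximum} GT/s")
```

A lower current speed is not necessarily a fault: many GPUs drop the link speed at idle to save power, so the numbers are best read while the card is under load. 5 GT/s corresponds to Gen2, 8 GT/s to Gen3 and 16 GT/s to Gen4.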

I had expected the card to fail running in Gen4 mode as the riser cable I was using wasn't certified for that bandwidth, but I could not find any reports of risers of such poor quality that they wouldn't even make Gen3, and the one I was using wasn't exactly cheap. So that was quite a showstopper. Benchmarking showed that the performance impact of PCIe Gen3 bandwidth wasn't huge but still visible, and requiring such compromises right from the start of a new build just didn't feel right. At least I had video output again which allowed me to continue setting up the system.
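To put the link generations into perspective, the theoretical bandwidth per direction follows from the per-lane transfer rate and the line encoding (8b/10b for Gen2, 128b/130b for Gen3 and Gen4). The snippet below is a back-of-the-envelope sketch under the assumption of a full x16 link; it ignores protocol overhead, so real throughput is lower. The function name is made up for this example.

```python
# Per-lane transfer rate in GT/s and encoding efficiency per PCIe generation.
GENERATIONS = {
    2: (5.0, 8 / 10),      # 8b/10b encoding
    3: (8.0, 128 / 130),   # 128b/130b encoding
    4: (16.0, 128 / 130),  # 128b/130b encoding
}


def bandwidth_gb_per_s(generation, lanes=16):
    """Theoretical one-directional bandwidth in GB/s, before protocol overhead."""
    rate_gts, efficiency = GENERATIONS[generation]
    # GT/s times efficiency gives usable Gbit/s per lane; divide by 8 for GB/s.
    return rate_gts * efficiency / 8 * lanes


if __name__ == "__main__":
    for gen in sorted(GENERATIONS):
        print(f"Gen{gen} x16: {bandwidth_gb_per_s(gen):.2f} GB/s")
```

With these figures a x16 link comes out at 8 GB/s for Gen2, roughly 15.75 GB/s for Gen3 and roughly 31.5 GB/s for Gen4, so each step down in generation halves the available bandwidth.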


Replacing Parts

Trying to switch over to the new system as my daily driver the NVMe issue got worse and worse. The system required 6-9 power cycles to come up with the SSD recognized and otherwise either got stuck with a random POST code, didn't recognize the SSD or seemed to run the bootloader without any video output on either of the screens.

The SSD was not contained in the official Storage QVL for the board so I quickly sent it back (lucky to be just within the two weeks of return window) and bought myself a Crucial P1 1TB. This one was technically not listed either but at least its smaller variants were, so I thought this was safe enough. While I could only tell from successful POSTs with video, the P1 seemed to get recognized much better than the SX8200, so I called that an improvement. Unfortunately the issue wasn't entirely solved and quickly started annoying me again so I continued my research.

The way NVMe works made me have a look at the memory modules I was using. I learned that Ryzen can be a bit picky with slow modules so I decided to replace the Corsair Dominator Platinum DDR4-2666 modules I had re-used from Thor III with some proper, more Ryzen-friendly DIMMs, the G.Skill Trident-Z Neo DDR4-3600.

Unfortunately the new memory did not change anything so I reached out once more for the endless wisdom of Reddit to gather opinions on which component to swap next. While waiting for feedback I got in touch with ASRock tech support to clarify whether one of the undocumented settings could be causing my trouble (there were multiple options regarding PCIe signal amplification, called PCIe Redriver). Their NL department responded fairly quickly and after the usual round of BIOS update ping-pong they suggested to simply RMA the board, which I did. The shop I had bought the board from was even kind enough to test my CPU along with the replacement just to make sure that one was okay as well.

It was mid-December and I went on a skiing trip while the components were in RMA. The process did not take very long so I had a new board and my tested CPU back in hands when I came back from Austria. Unfortunately things were not better in any way with the new board and this build started to make me really frustrated. One of the most frustrating things was the fact that a friend of mine had just finished his (air cooled) Ryzen build and had not experienced any issue at all. So I asked him about his components and took a shot in the dark by ordering his exact same SSD, the Samsung 970 EVO 1 TB. Not expecting much to change I was surprised when testing it: it seemed to get recognized every single boot. Unbelievable, the first real fix after more than two months of trial-and-error! Unfortunately it was too late to send back the Crucial P1 so I had to fall back to an option that I hadn't used for years: eBay. Luckily I had no issues getting it sold, and I even made a small amount of profit compared to the original price.

Switching the GPU

Back working on the GPU issues I found out that my old GTX 980 did not have any issues running in the new system, even in PCIe Gen3 mode and with the riser extension I was using. Having read multiple reviews of the 5700XT reference design that complain about poor design choices I was convinced to finally get rid of that XFX card I had bought and started looking for alternatives. Just at that time the PowerColor RX 5700XT Liquid Devil got released and it was being titled 'as good as Navi can get' so I was hooked, again. After placing my order I quickly put the XFX card on eBay for sale.

Unboxing the Liquid Devil I was charmed: that card was the most beautiful graphics card I had seen in a long time, just gorgeous! So I re-assembled the loop and fired it up. Well, my pixel art issues went away but the system still required multiple attempts to POST with video output, and still occasionally got stuck during POST with random Debug codes.

Away with the AQUA

At that point it had turned February, I had had enough of the AQUA and ordered the second option I had identified in the beginning: the Gigabyte X570 AORUS Xtreme. The AQUA, you guessed it, went on eBay. Unfortunately it didn't get close to the initial price, but I was just relieved to have ended our love-hate relationship. In order to cool my CPU I ordered an EK-Velocity AMD RGB Nickel+Acryl block, as the new board would not have a monoblock.


Having to re-assemble the loop for the CPU block I took the opportunity to re-plan the entire thing and make it a bit less tinkery: I ordered some nice silver Alphacool fittings to replace the Phobya black nickel ones I was using. I had never had a single leak with them, but they were in their 10th year of service so it was just a question of time for the first o-ring to give up. I also bought a proper mount to put my reservoir on a fan instead of having it clamped to the drive bay covers.

The optics of the new board fortunately didn't interfere with my mostly black and silver colour theme and I was quite happy with the way the new loop layout had worked out. The system POSTed instantly and most of the GPU issues I had experienced before were gone, but I was quickly greeted by new trouble: the new GPU was stuck at 300 MHz core clock when using the 2020 version of the Adrenalin driver. Luckily there was an updated vBIOS available for the card at PowerColor's DevilClub which I installed and... nothing happened, still locked at 300 MHz. A tech support ticket later I had learned that the current AMD drivers were so buggy that they even fail silently to overwrite some left-overs from a previous driver version, so I had to use DDU again to perform another clean installation of the Adrenalin driver. This time it worked, unlocking the card to its full potential.

At that point the new build had reached a level of usability that I switched over to it as my daily driver and almost got used to the remaining issue of requiring multiple power-ons to POST.

GPU Issues Getting Worse

After almost a month the issue suddenly got worse: the system required more and more power-ons for a successful POST, i.e. a POST with video output, and while messing around with different video configurations I got another really annoying issue: The RTC of the board seemed to reset itself to the year 2920 each time the system was shut down.

At first I didn't notice this as I was mainly booting Linux, but when I tried to get into Windows system settings in order to disable Fast Boot I noticed that I couldn't reach a single website (due to pseudo-expired SSL/TLS certificates). Windows didn't even manage to synchronize the system clock as the offset was so high.
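Why a wrong clock breaks every HTTPS site becomes obvious when looking at how certificate validity is checked: the current time has to fall inside the certificate's validity window. The following is a simplified sketch of just that comparison, not a full TLS verification; the dates of the validity window are invented for illustration.

```python
from datetime import datetime, timezone


def certificate_valid_at(now, not_before, not_after):
    """True if `now` lies inside the certificate's validity window."""
    return not_before <= now <= not_after


# Illustrative validity window of a hypothetical one-year certificate.
NOT_BEFORE = datetime(2020, 1, 1, tzinfo=timezone.utc)
NOT_AFTER = datetime(2021, 1, 1, tzinfo=timezone.utc)

if __name__ == "__main__":
    sane_clock = datetime(2020, 3, 15, tzinfo=timezone.utc)
    broken_rtc = datetime(2920, 3, 15, tzinfo=timezone.utc)  # clock after the RTC reset

    print("sane clock:", certificate_valid_at(sane_clock, NOT_BEFORE, NOT_AFTER))
    print("broken RTC:", certificate_valid_at(broken_rtc, NOT_BEFORE, NOT_AFTER))
```

With the clock sitting in the year 2920 every certificate appears to have expired centuries ago, so the browser refuses the connection even though nothing is wrong with the site.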

Cell voltage for the RTC backup battery looked good (at least telling from the hardware monitoring sensors) and unfortunately with that board you cannot replace it without a considerable amount of disassembly (the board's front armor needs to be removed). So I sat back and convinced myself to try another RMA run, this time with the motherboard, as the RTC issue would definitely be on that side.

When I had the board in hands making it ready for packaging I realized what would have caused the RTC issue: a pin of the USB 3.1 front panel extension header was seriously bent and shorted two other pins. I didn't look at the pinout as for what exactly was shorted there but this wasn't good for sure. After bending it back I decided to send the board in RMA anyway, both to make sure there was no permanent damage and because of the other issues I was having.

The process didn't take long and I got the board back with a note stating that it had been tested and no issues had been found. I wasn't sure whether to call this a step forward, but at least I had my confirmation that the USB short had not done permanent damage.

When re-assembling the loop this time I forced myself to make smaller steps: I put the (insanely loud) Wraith Prism stock blower cooler on the CPU and fired the system up with my GTX 980 to confirm that motherboard and memory were working fine. Then I put in my riser extension to make sure it would not fail in PCIe Gen3 mode. After that I dared to follow another recommendation I got via Reddit: firing up the LiquidDevil without a watercooling loop assembled. That required a bit of preparation (uninstalling the NVIDIA driver first) to minimize the time the card would run without proper cooling. Temperatures were impressively low, I guess a decent water block simply has a huge thermal capacity. Then I was hit by two blows at once: the card still required multiple tries to POST with video output and when switching to the latest driver (that was supposed to fix a lot of the issues people were complaining about with Navi) I finally got that one issue everybody else had to cope with: losing video when launching games (or benchmarks).

Goodbye to Navi: Switching the GPU... again

At that point I really had enough. I was frustrated enough to break with the goal of having a system without proprietary NVIDIA drivers (yes I do know of nouveau but it's just not good enough in terms of power management and even for playing CS:GO on Linux once in a while). I just wanted a system that works. A system that is stable and that I can rely on. A system that doesn't trouble me.

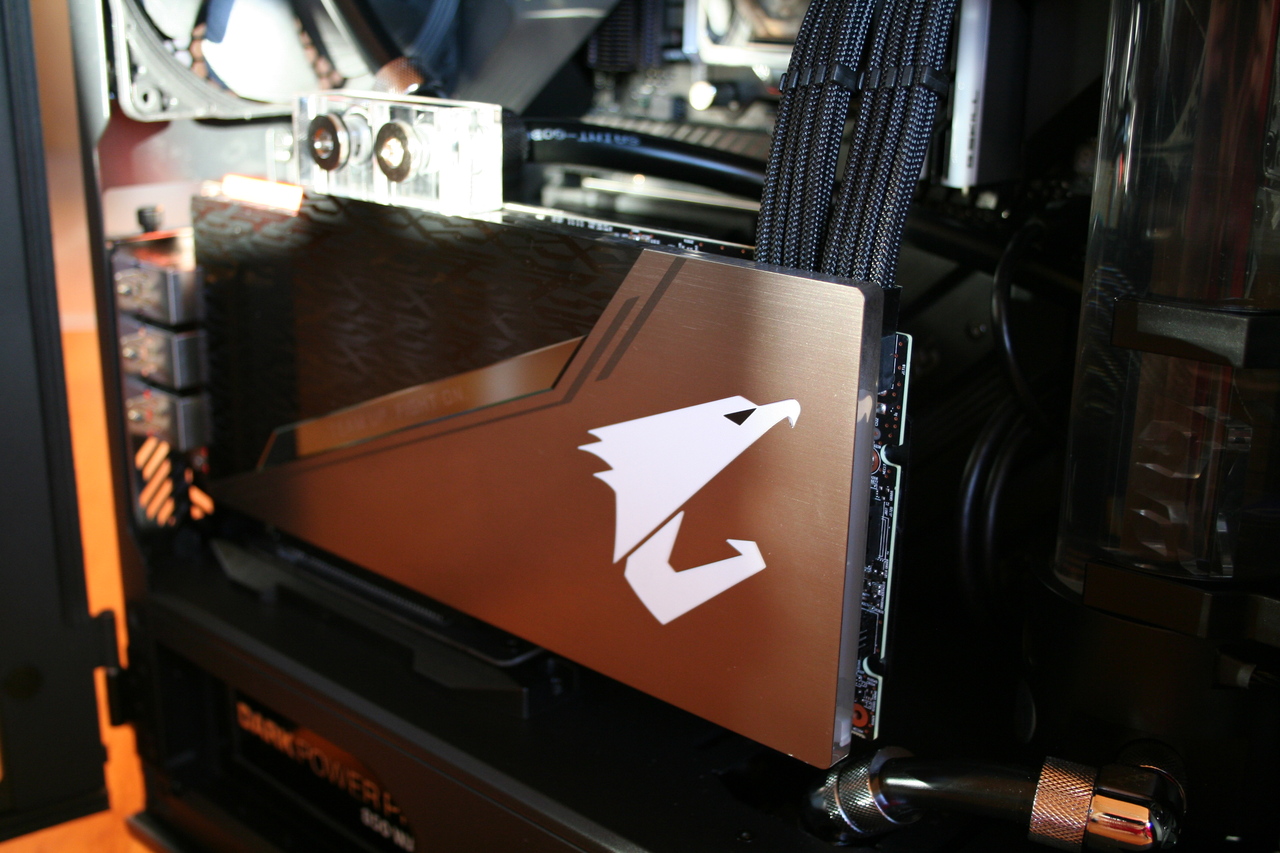

So I made the jump and bought a Gigabyte AORUS GeForce RTX 2080 SUPER WATERFORCE WB 8G (did I mention that I already hate Gigabyte's tendency towards all-caps product names?). I quickly settled on an RTX 2080 Super as I didn't want a downgrade in performance and searched for cards with waterblock preinstalled. So it came down to either the Gigabyte card or the FTW3 Hydro Copper Gaming from EVGA. While my past two cards had both been EVGA GeForce cards (and the second-last one even been of the Hydro Copper series) I couldn't justify the substantial extra charge compared to the Gigabyte card, especially as the factory overclock was almost identical.

I must admit I wasn't pleased by the looks of the AORUS RTX but at least the decals would match my motherboard perfectly. When I had the card in hands my first impression was confirmed: (in my eyes) it looks much cheaper than it really is with its acrylic front completely covered by a monotone dark sticker and flashy orange rubber protectors for both the DP/HDMI ports at the slot bracket and the NVLink fins. Nothing compared to the aesthetic pleasure of the LiquidDevil. So it wouldn't be love at first sight for sure.

When installing the card I originally wanted to take the same iterative approach as with the previous assembly: testing it first without the watercooling loop assembled and without the riser cable. The latter, to my shock, didn't work as the card was simply too long to fit in horizontal orientation. It's really ridiculously long, with the block cover extending even past the PCB. So I jumped directly to vertical testing.

When pressing the power button I made myself the promise that I would put the LiquidDevil on eBay that same day if the black screen issues would be gone with the NVIDIA card. And what shall I say... that just happened. From the moment I put in the RTX 2080 SUPER all the trouble was just gone.

State of the Art

At the time of writing none of the issues have recurred. The system has continued to POST with video output and has never failed to recognize the NVMe SSDs.

My Personal Lessons Learned

- My next build will be air-cooled. Period. Sure, water-cooled rigs are sexy, and if you know what you're doing and plan things ahead the build can go much easier than in the early days of the hobby. But as soon as something goes wrong and you need to replace parts (even just for analysis) everything is just so much more complicated and expensive (in terms of both time and money) than with an air-cooled system. I shall withstand the seduction of r/watercooling next time.

- Have a backup system at hand. Normally when I build a new system I re-use some parts of the old one (e.g. the PSU). This implies that once I start building I don't have a workstation in an operating state anymore, which tends to make me nervous and online research fiddly in case of complications. This time I got an extra PSU so I had the luxury of keeping my old rig running until the new one was ready. Much more relaxing.

- AMD excels with Ryzen but still needs to sort out some issues with Navi. In more than 10 years with NVIDIA GPUs I had not a single faulty driver install. AMD (at least with Navi) is levels away from this experience.

- eBay can be the building fool's friend. I almost never managed to stay within the two weeks of return window for parts. Putting them up for auction has turned out to be a good way of getting a refund. Although you still need the money to pay the auction fee and the price difference, of course.

- A drain port is essential. Being able to flush the loop within a minute makes working on it so much easier. Never had one before, never going to go without one again.

- Have enough coolant ready for multiple fill-ups. In the past I always bought pre-mixed coolant as I didn't want to risk fallout gunking up my loop. This time I stuck to just distilled water, a biocide and a corrosion inhibitor as additives. This way I can easily drain and re-fill the loop many times without the need of buying something (online and then waiting for the delivery). This lowers the hurdle of doing maintenance or changing parts for analysis.